-

Event access management Power App with external barcode scanning for passes

I had a request from a customer who is running a 10-day event where attendees are permitted in certain areas. There will be in excess of 500 attendees who will all be issued passes that will indicate which area they are permitted in and the pass could include a QR code. Each of the entrances…

-

Smart dog training buttons

At first, it seemed a silly idea to create buttons our new puppy could press to tell us she wanted something. A few weeks later, after introducing these to our new 8-week-old puppy Poppy, it seems the idea was far from silly. Poppy has started to learn that something happens when she presses these…

-

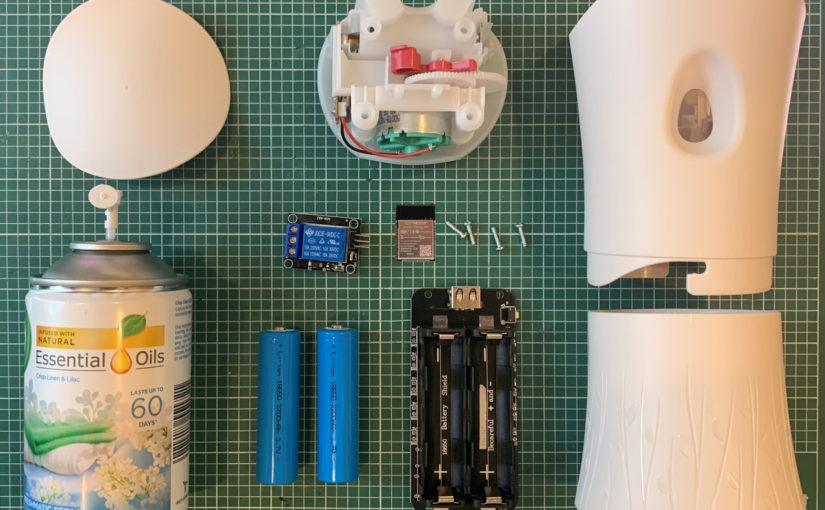

Can you IoT an Airwick air freshener?

This project is one that makes me feel on top of the world, but it is also possibly one of my craziest IoT projects. I am fascinated with IoT and the point where software interacts with my physical world; for example, in the simplest form, a sensor programmed to turn a light bulb on when there…

-

Turn lights on and off when Windows 10 is locked or unlocked

Like many, I have smart lighting throughout my house. This includes my office and desk. Behind my beautiful ultrawide monitor, I have a 3m Philips Hue strip which helps add some peripheral light and softens the glare when using the screen. I use scenes to adjust the level of white light towards early evening, but…

-

Delicate mushroom risotto

I’ve always been fond of risotto, and during a work trip to Rotterdam, Netherlands, this was reconfirmed when I had the most incredibly delicious mushroom risotto. Since then I have been just dying to cook it. But to cook it like the dish I had in the Netherlands I’ve had to source some…

-

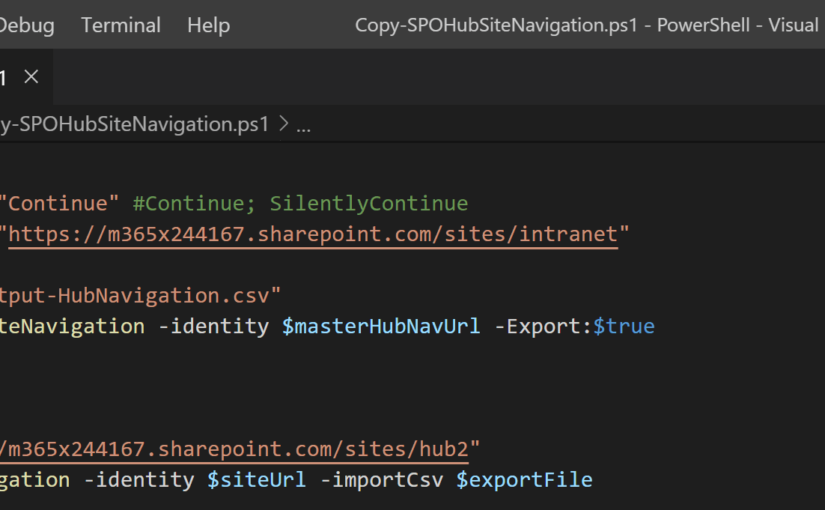

Gist extension for VS Code

Gist is a big part of my workflow. I’ve always been on the lookout for a desktop tool. Well, it turns out I was looking in the wrong place. A few days ago I discovered two VS Code extensions, Gist and GitHub Gist Explorer. They are both really great extensions and so far I’m really enjoying having…

-

Little Moreton Hall

I visited Little Moreton Hall, another National Trust property, this weekend. This is a very wonky Tudor property that has been left entirely in its original state. The great gallery has some claims to tennis. I have shared a few more photos that are available on 500px here. You can browse my published portfolio over at 500px. Be…

-

Rutting Deer @ Tatton Park

I recently visited Tatton Park in Knutsford where the deer were rutting. This is a beautiful park to walk in, with plenty for kids to do too! This was my first time photographing deer this close and they gave me plenty of opportunities. I have shared a few more photos that are available on 500px. This…

-

Granada, Spain and Gibraltar

A recent trip to Granada, Spain filled me with joy after an absolutely stunning guided tour around the Alhambra. I also found a little gem of a steak restaurant called Apo restaurante on Plaza de San Lázaro 15. The trip finished with a road trip over to Gibraltar and Seville. The views of Africa from the…

-

Rufford Old Hall

A beautiful day to visit Rufford Old Hall, a National Trust property. The grounds are lovely to walk, as is the canal, which is only a few minutes away. I have shared a few more photos that are available on 500px. You can browse my published portfolio over at 500px. Be sure to share your feedback.